New Foreign Affairs piece:

As part of our research at Georgetown University’s Center for Security and Emerging Technology, we examined thousands of publicly available PLA procurement requests published over the last three years. These documents reveal that China is urgently pushing the third phase of its modernization. The breadth of its efforts to integrate artificial intelligence into its military and the speed of its experimentation are striking. The PLA is prototyping AI capabilities that can pilot unmanned combat vehicles, detect and respond to cyberattacks, track seaborne vessels, and identify and strike targets on land, at sea, and in space. The Chinese military is also developing systems that ingest, analyze, and augment massive amounts of data to enhance tactical and strategic decision-making, as well as tools that create deepfake images and videos for disinformation campaigns.

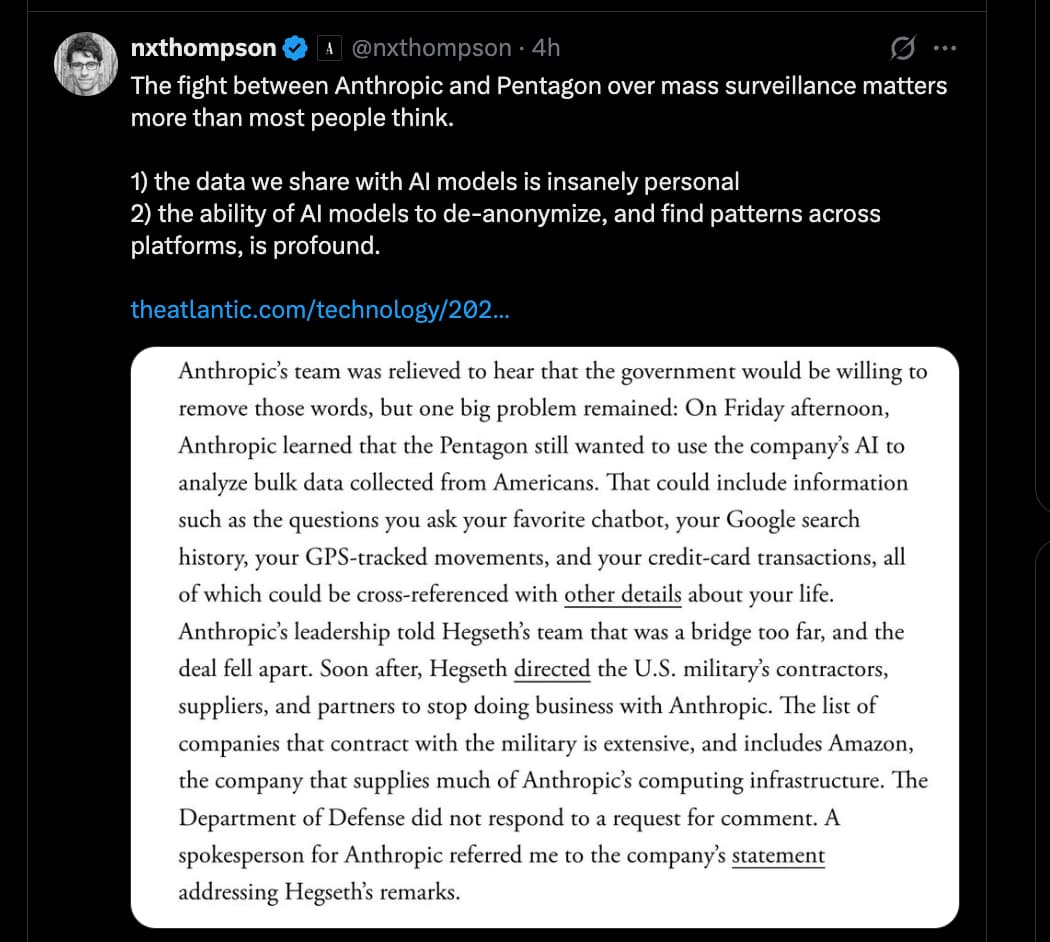

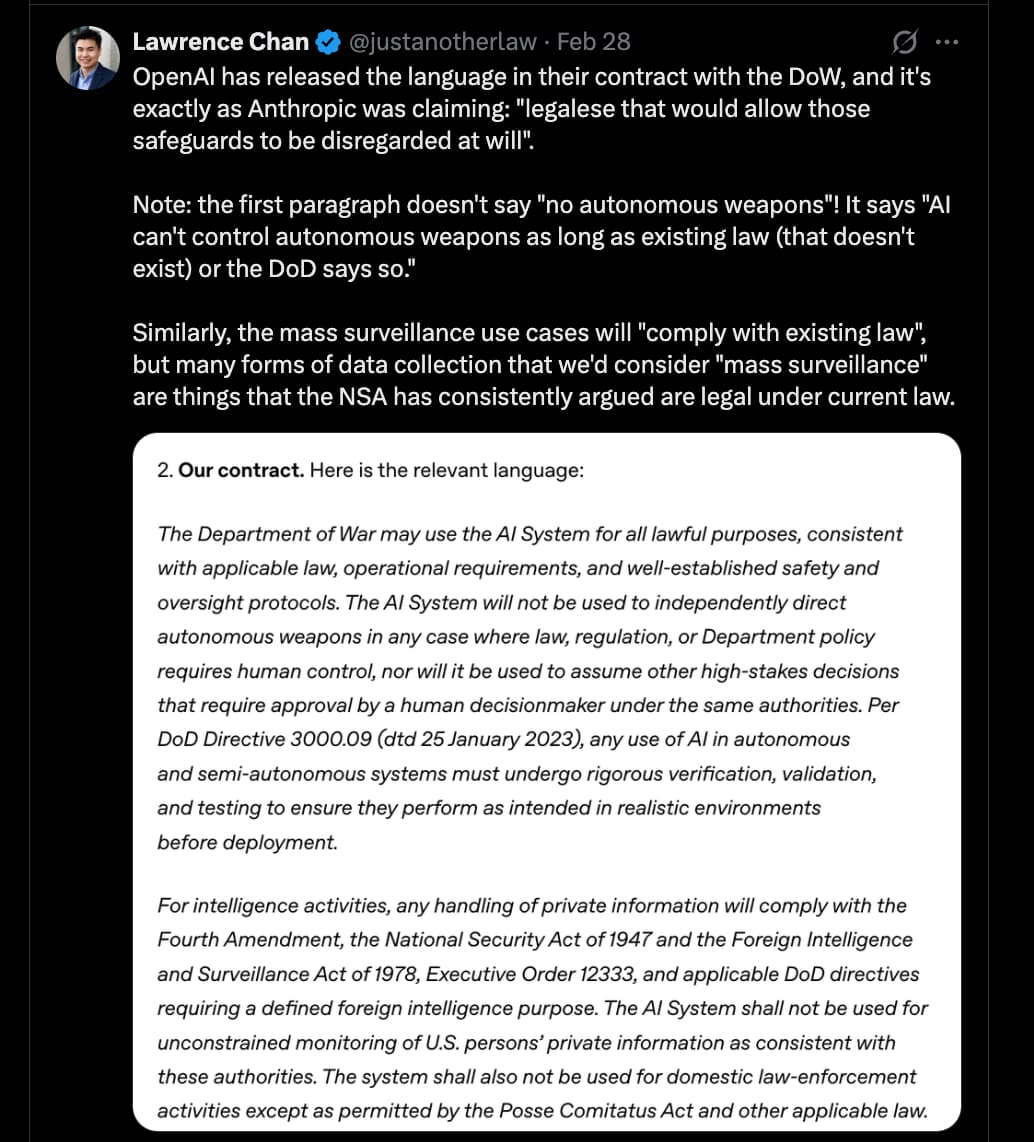

In short, the PLA is fostering an ecosystem for rapid AI development that connects novel research with frontline operations. The United States, meanwhile, has declared the AI company Anthropic a supply chain risk, effectively barring a leader in frontier AI from supporting the U.S. government. The U.S. military still holds critical advantages in computing power, technical talent, and operational experience. But to stay ahead of Beijing, Washington will need to carefully shepherd its advantages, prototype with greater urgency, and, perhaps most important, scale the AI systems that give it a battlefield advantage.

The DoW / Anthropic meltdown, as well as the use of autonomous weapons in the Russia-Ukraine war, signals to the Chinese government that it needs to work even faster and harder. Expect autonomous weapons to show up in skirmishes all over the world very soon, sooner than people are expecting, and then for some of the more devastating ones to be used for deterrence, much like nukes.

On the use of AI for domestic surveillance (not specifically by the DoW, but by the FBI, the Department of Justice, and other agencies): it's already being used to some degree, but we will see a dramatic increase in the coming year. Why? Because Trump et al. fear a Democratic takeover following the midterm elections. They fear endless Congressional hearings and a curb on their power to do things that are kind of illegal (because ordinarily those things need approval from Congress). This is why they are doing things like scooping up the 2020 election records from Fulton County, Georgia (they could feed these into Claude and ask it to find even the most inconsequential piece of dirt), demanding that Minnesota hand over its records, gerrymandering in various states (or attempting to; so far Indiana has resisted), possibly issuing an executive order to mess with the voting process (a working document exists, though Trump denies he will apply it), and several more things. Some of these would matter more for elections in years to come, like granting refugee status to white South Africans by the thousands, which would be a repeat of how Cubans flooded into the country in the late '70s under Carter and the '80s under Reagan.

If they wanted to prevent that Congressional takeover, it would be rational to apply advanced AI to things that are technically legal only because the law hasn't yet caught up with the capabilities. For example, they could claim that "everybody knows the voting process is riddled with fraud" (it isn't), then feed voter rolls into Claude and ask it to "find the fraud". It might come back with something like: John Doe has an unpaid legal debt and therefore should be barred from voting, since some states require all legal debts to be paid before a person can vote. "See, I TOLD you there was fraud!"