Source: https://www.voronoiapp.com/technology/AI-Optimism-By-Median-Age--7470

Who wouldn’t like a cure for all or most diseases and a healthy lifespan of 500 years? This will only be achievable with ASI. I hope AGI will be reached ASAP. After that, the time to ASI will be limited only by compute and energy, problems that the AGI itself would help figure out.

I’m among the enthusiastic optimists.

I’m in the middle right now… I can see upsides and downsides (primarily for our children and for white-collar workers between 25 and 50):

THE WORST-CASE FUTURE FOR WHITE-COLLAR WORKERS

The well-off have no experience with the job market that might be coming.

The labor market for office workers is beginning to shift. Americans with a bachelor’s degree account for a quarter of the unemployed, a record. High-school graduates are finding jobs quicker than college graduates, an unprecedented trend. Occupations susceptible to AI automation have seen sharp spikes in joblessness. Businesses really are shrinking payroll and cutting costs as they deploy AI. In recent weeks, Baker McKenzie, a white-shoe law firm, axed 700 employees, Salesforce sacked hundreds of workers, and the auditing firm KPMG negotiated lower fees with its own auditor. Two CNBC reporters with no engineering experience “vibe-coded” a clone of Monday.com’s workflow-management platform in less than an hour. When they released their story, Monday.com’s stock tanked.

Maybe algorithm-driven changes will happen slowly, giving workers plenty of time to adjust. Maybe white-collar types have 12 to 18 months left. Maybe the AI-related job carnage will be contained to a sliver of the economy. Maybe we should be more worried about a stock-market bubble than an AI-driven labor revolution.

But if white-collar layoffs cause a downturn, Washington might not be able to restore hiring and lift consumer spending as it has done before. Businesses wouldn’t need the skills workers possess. Firms wouldn’t want to hire the legions of accountants, engineers, lawyers, middle managers, human-resources executives, financial analysts, PR types, and customer-service agents they just laid off. (Writers would be fine, I choose to believe.) The United States would have a “structural” unemployment problem, as economists put it, not a “cyclical” demand problem.

Full article: THE WORST-CASE FUTURE FOR WHITE-COLLAR WORKERS (The Atlantic)

This has gotten a lot of coverage in the tech world and beyond this week:

Something Big Is Happening

Think back to February 2020.

If you were paying close attention, you might have noticed a few people talking about a virus spreading overseas. But most of us weren’t paying close attention. The stock market was doing great, your kids were in school, you were going to restaurants and shaking hands and planning trips. If someone told you they were stockpiling toilet paper you would have thought they’d been spending too much time on a weird corner of the internet. Then, over the course of about three weeks, the entire world changed. Your office closed, your kids came home, and life rearranged itself into something you wouldn’t have believed if you’d described it to yourself a month earlier.

I think we’re in the “this seems overblown” phase of something much, much bigger than Covid.

I’ve spent six years building an AI startup and investing in the space. I live in this world. And I’m writing this for the people in my life who don’t… my family, my friends, the people I care about who keep asking me “so what’s the deal with AI?” and getting an answer that doesn’t do justice to what’s actually happening. I keep giving them the polite version. The cocktail-party version. Because the honest version sounds like I’ve lost my mind. And for a while, I told myself that was a good enough reason to keep what’s truly happening to myself. But the gap between what I’ve been saying and what is actually happening has gotten far too big. The people I care about deserve to hear what is coming, even if it sounds crazy.

I should be clear about something up front: even though I work in AI, I have almost no influence over what’s about to happen, and neither does the vast majority of the industry. The future is being shaped by a remarkably small number of people: a few hundred researchers at a handful of companies… OpenAI, Anthropic, Google DeepMind, and a few others. A single training run, managed by a small team over a few months, can produce an AI system that shifts the entire trajectory of the technology. Most of us who work in AI are building on top of foundations we didn’t lay. We’re watching this unfold the same as you… we just happen to be close enough to feel the ground shake first.

But it’s time now. Not in an “eventually we should talk about this” way. In a “this is happening right now and I need you to understand it” way.

…

What this means for your job

I’m going to be direct with you because I think you deserve honesty more than comfort.

Dario Amodei, who is probably the most safety-focused CEO in the AI industry, has publicly predicted that AI will eliminate 50% of entry-level white-collar jobs within one to five years. And many people in the industry think he’s being conservative. Given what the latest models can do, the capability for massive disruption could be here by the end of this year. It’ll take some time to ripple through the economy, but the underlying ability is arriving now.

This is different from every previous wave of automation, and I need you to understand why. AI isn’t replacing one specific skill. It’s a general substitute for cognitive work. It gets better at everything simultaneously. When factories automated, a displaced worker could retrain as an office worker. When the internet disrupted retail, workers moved into logistics or services. But AI doesn’t leave a convenient gap to move into. Whatever you retrain for, it’s improving at that too.

Let me give you a few specific examples to make this tangible… but I want to be clear that these are just examples. This list is not exhaustive. If your job isn’t mentioned here, that does not mean it’s safe. Almost all knowledge work is being affected.

Legal work. AI can already read contracts, summarize case law, draft briefs, and do legal research at a level that rivals junior associates. The managing partner I mentioned isn’t using AI because it’s fun. He’s using it because it’s outperforming his associates on many tasks.

Financial analysis. Building financial models, analyzing data, writing investment memos, generating reports. AI handles these competently and is improving fast.

Writing and content. Marketing copy, reports, journalism, technical writing. The quality has reached a point where many professionals can’t distinguish AI output from human work.

Software engineering. This is the field I know best. A year ago, AI could barely write a few lines of code without errors. Now it writes hundreds of thousands of lines that work correctly. Large parts of the job are already automated: not just simple tasks, but complex, multi-day projects. There will be far fewer programming roles in a few years than there are today.

Medical analysis. Reading scans, analyzing lab results, suggesting diagnoses, reviewing literature. AI is approaching or exceeding human performance in several areas.

Customer service. Genuinely capable AI agents… not the frustrating chatbots of five years ago… are being deployed now, handling complex multi-step problems.

A lot of people find comfort in the idea that certain things are safe. That AI can handle the grunt work but can’t replace human judgment, creativity, strategic thinking, empathy. I used to say this too. I’m not sure I believe it anymore.

The most recent AI models make decisions that feel like judgment. They show something that looks like taste: an intuitive sense of what the right call is, not just the technically correct one. A year ago that would have been unthinkable. My rule of thumb at this point is: if a model shows even a hint of a capability today, the next generation will be genuinely good at it. These things improve exponentially, not linearly.

Full article: Something Big Is Happening (Matt Shumer)

Nate Silver writes “The Singularity won’t be gentle”:

One of the “additional points on why… the political impact of AI is probably understated” that I found interesting (and amusing) was this:

Cluelessness on the left about AI means the political blowback will be greater once it realizes the impact. My post last January was partly a critique of the political left. We have some extremely rich guys like Altman who claim that their technology will profoundly reshape society in ways that nobody was necessarily asking for. And also, conveniently enough, make them profoundly richer and more powerful! There probably ought to be a lot of intrinsic skepticism about this. But instead, the mood on the left tends toward dismissing large language models as hallucination-prone “chatbots”. I expect this to change at some point.

If you dislocate 20% of the white-collar workforce in 18 to 24 months, I suspect there will be repercussions. We live in interesting times.

From Nate Silver’s post:

Let me add a few additional points on why I think the political impact of AI is probably understated:

- “Silicon Valley” is bad at politics. If nothing else during Trump 2.0, I think we’ve learned that Silicon Valley doesn’t exactly have its finger on the pulse of the American public. It’s insular, it’s very, very, very, very rich — Elon Musk is now nearly a trillionaire! — and it plausibly stands to benefit from changes that would be undesirable to a large and relatively bipartisan fraction of the public. I expect it to play its hand in a way that any rich “degen” on a poker winning streak would: overconfidently and badly.

So when Silicon Valley leaders speak of a world radically remade by AI, I wonder whose world they’re talking about. Something doesn’t quite add up in this equation. Jack Clark has put it more vividly: “People don’t take guillotines seriously. But historically, when a tiny group gains a huge amount of power and makes life-altering decisions for a vast number of people, the minority gets actually, for real, killed.”

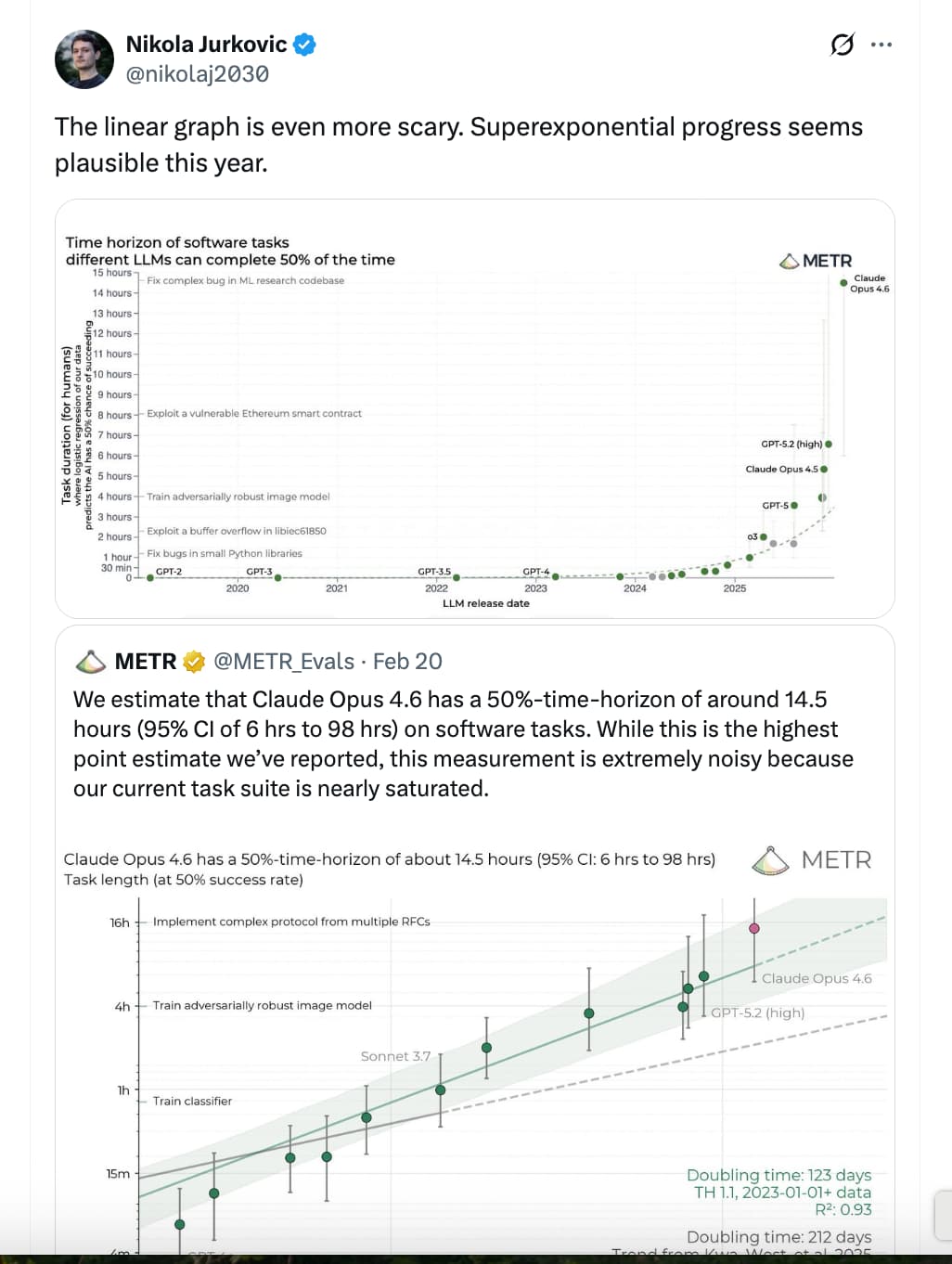

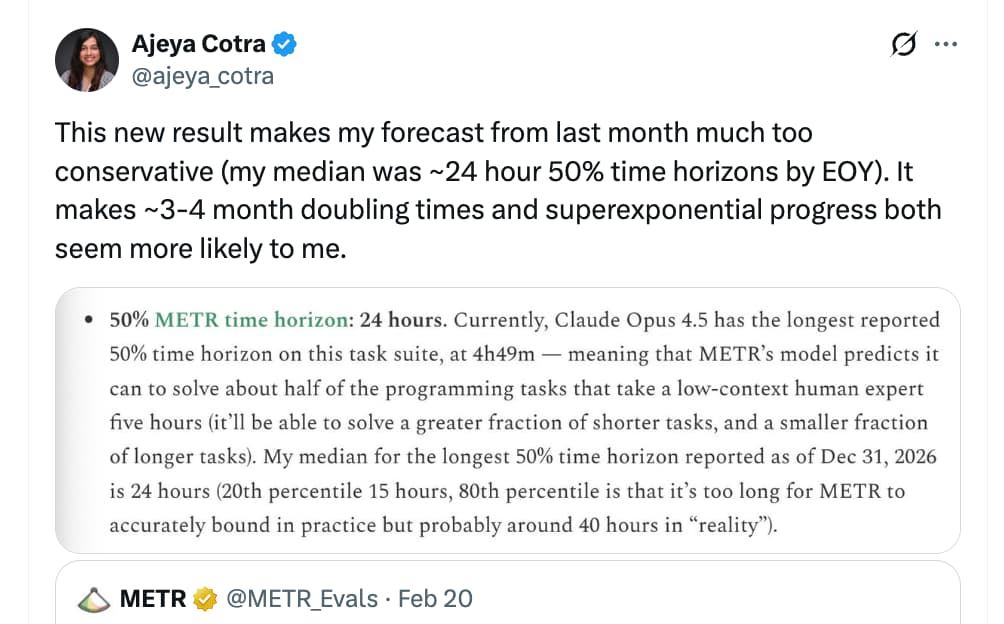

https://x.com/i/status/2024925581146722806

It looks like… hard takeoff…

Even… This… year…

https://x.com/i/status/2024924484688621769

But see Michael Trazzi

What if our AI bullishness continues to be right…and what if that’s actually bearish?

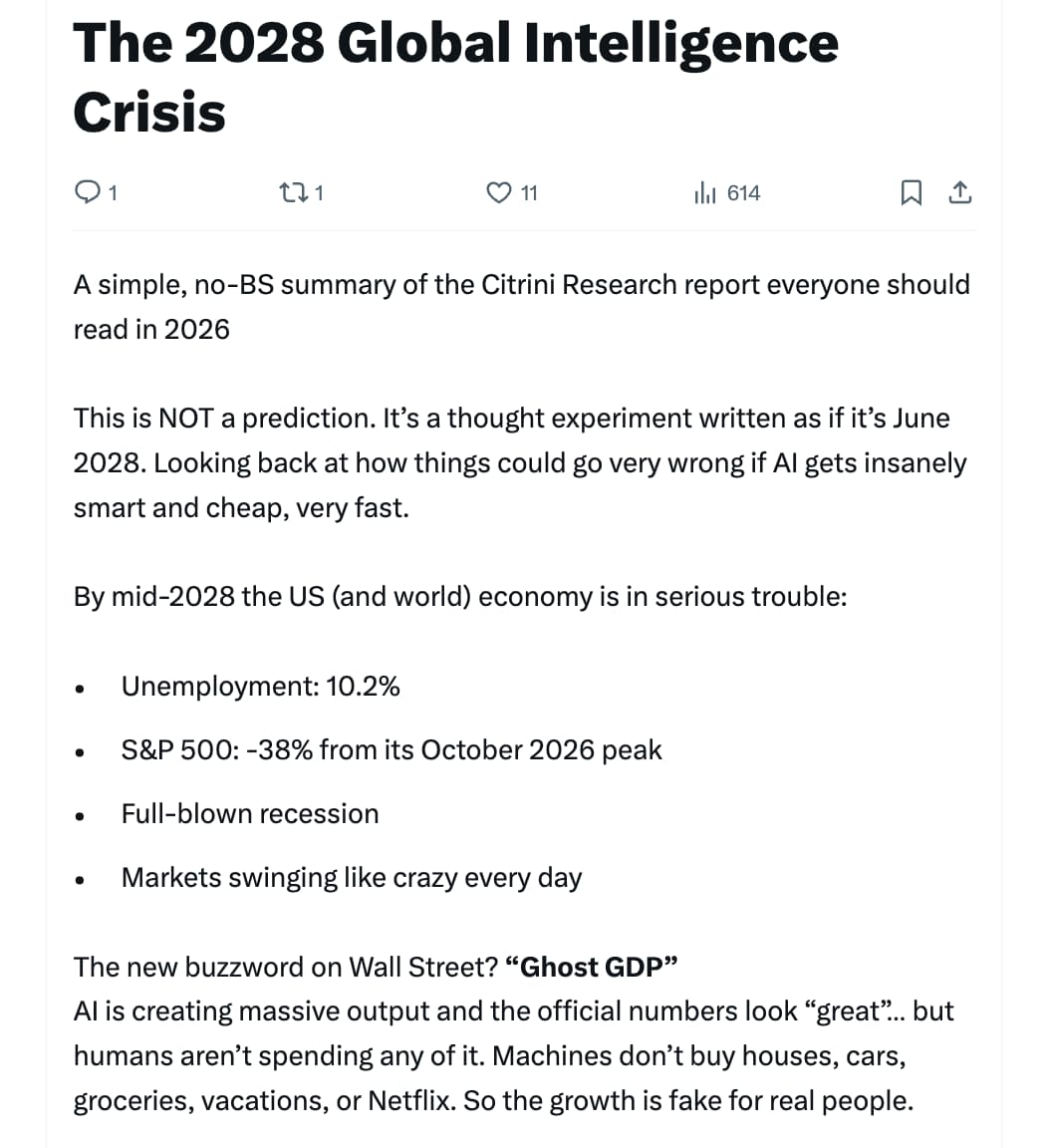

What follows is a scenario, not a prediction. This isn’t bear porn or AI doomer fan-fiction. The sole intent of this piece is modeling a scenario that’s been relatively underexplored. Our friend Alap Shah posed the question, and together we brainstormed the answer. We wrote this part, and he’s written two others you can find here.

Hopefully, reading this leaves you more prepared for potential left tail risks as AI makes the economy increasingly weird.

This is the CitriniResearch Macro Memo from June 2028, detailing the progression and fallout of the Global Intelligence Crisis.

Full write-up:

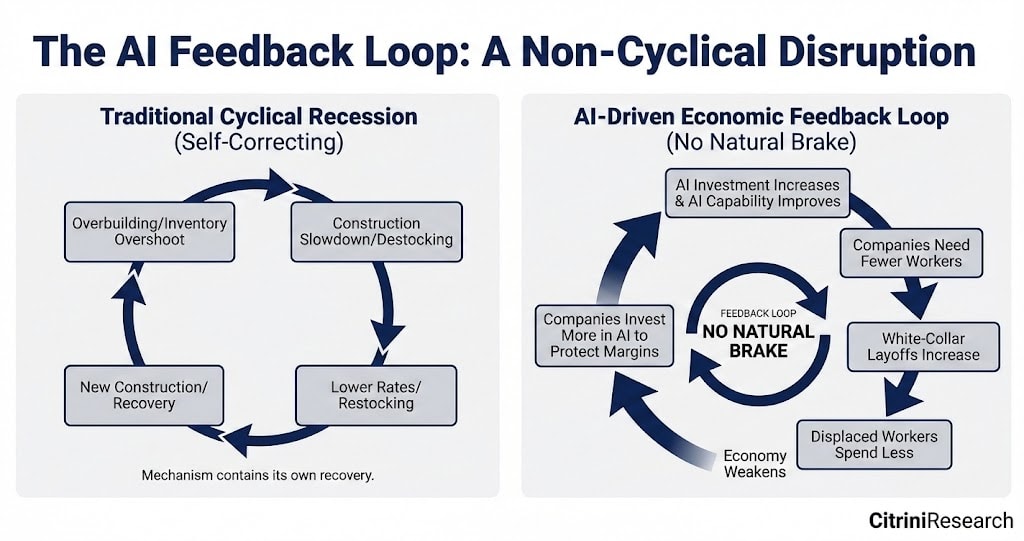

AI got better and cheaper. Companies laid off workers, then used the savings to buy more AI capability, which let them lay off more workers. Displaced workers spent less. Companies that sell things to consumers sold fewer of them, weakened, and invested more in AI to protect margins. AI got better and cheaper.

A feedback loop with no natural brake.
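To make the loop concrete, here is a minimal toy sketch in Python. It is not taken from the CitriniResearch memo: the function name `simulate_layoff_loop` and every parameter (starting employment of 100, a 5% initial layoff rate, a 25% per-round gain from reinvested savings) are hypothetical values chosen only to show the shape of the dynamic, and consumer spending is assumed to simply track employment.

```python
def simulate_layoff_loop(rounds=6, employed=100.0, layoff_rate=0.05, reinvest_gain=0.25):
    """Toy version of the loop: layoffs fund AI, which enables more layoffs.

    All parameters are hypothetical and chosen only for illustration.
    """
    history = []
    for step in range(1, rounds + 1):
        # Companies lay off a share of the remaining workforce.
        laid_off = employed * layoff_rate
        employed -= laid_off
        # Assumption: consumer spending simply tracks employment.
        spending = employed
        # Savings are spent on AI capability, so the next round's layoff rate rises.
        layoff_rate = min(1.0, layoff_rate * (1 + reinvest_gain))
        history.append((step, employed, spending, layoff_rate))
    return history


if __name__ == "__main__":
    for step, employed, spending, rate in simulate_layoff_loop():
        print(f"round {step}: employed={employed:.1f} "
              f"spending={spending:.1f} next layoff rate={rate:.3f}")
```

In this sketch nothing pushes the layoff rate back down, which is the "no natural brake" property: employment and spending fall every round while the rate of decline keeps growing. Any real economy has offsetting forces that this toy deliberately leaves out.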

Some speed bumps on the path to tech nirvana:

https://www.axios.com/2026/02/23/ai-defense-department-deal-musk-xai-grok

Musk’s xAI and Pentagon reach deal to use Grok in classified systems

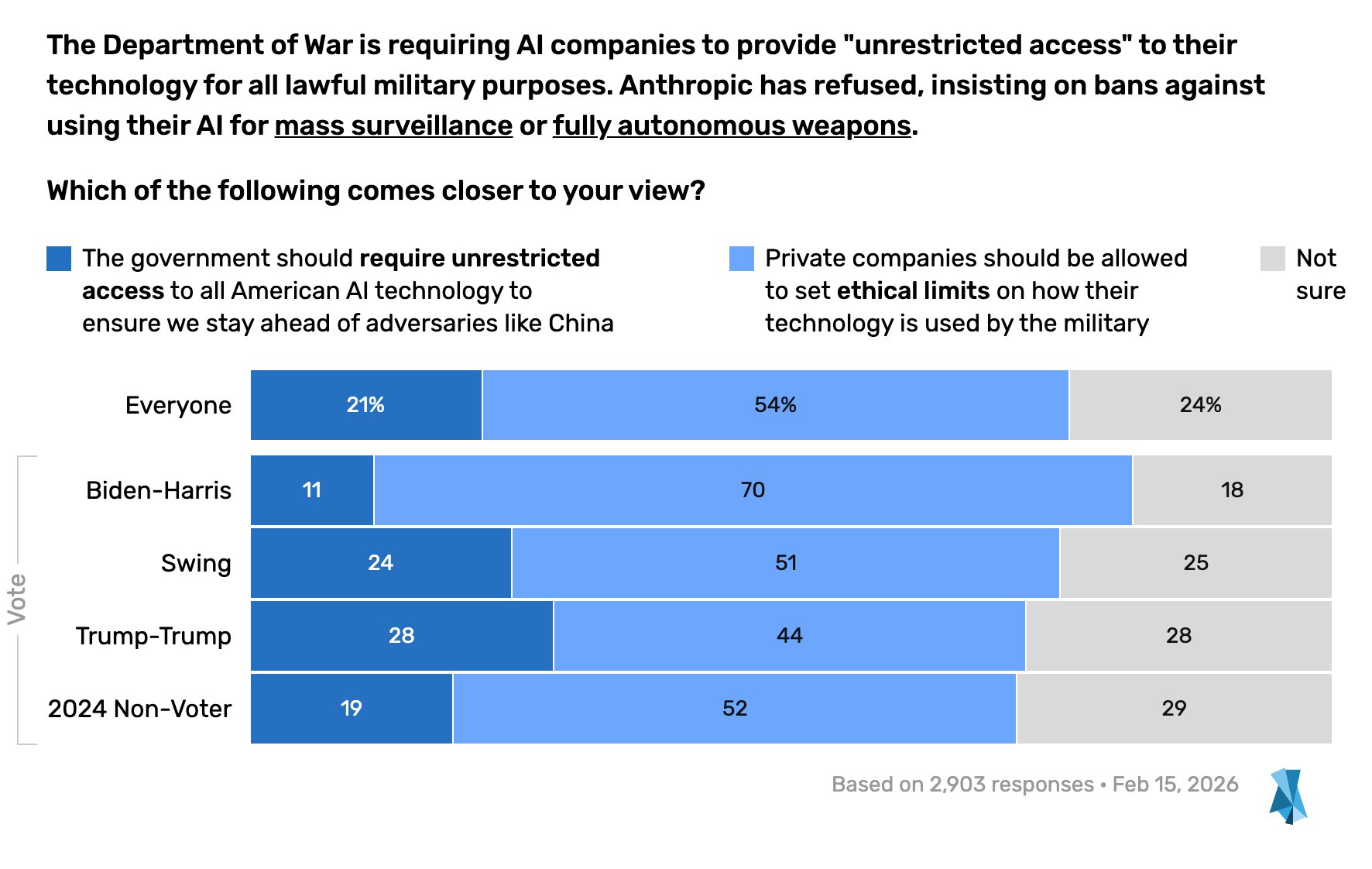

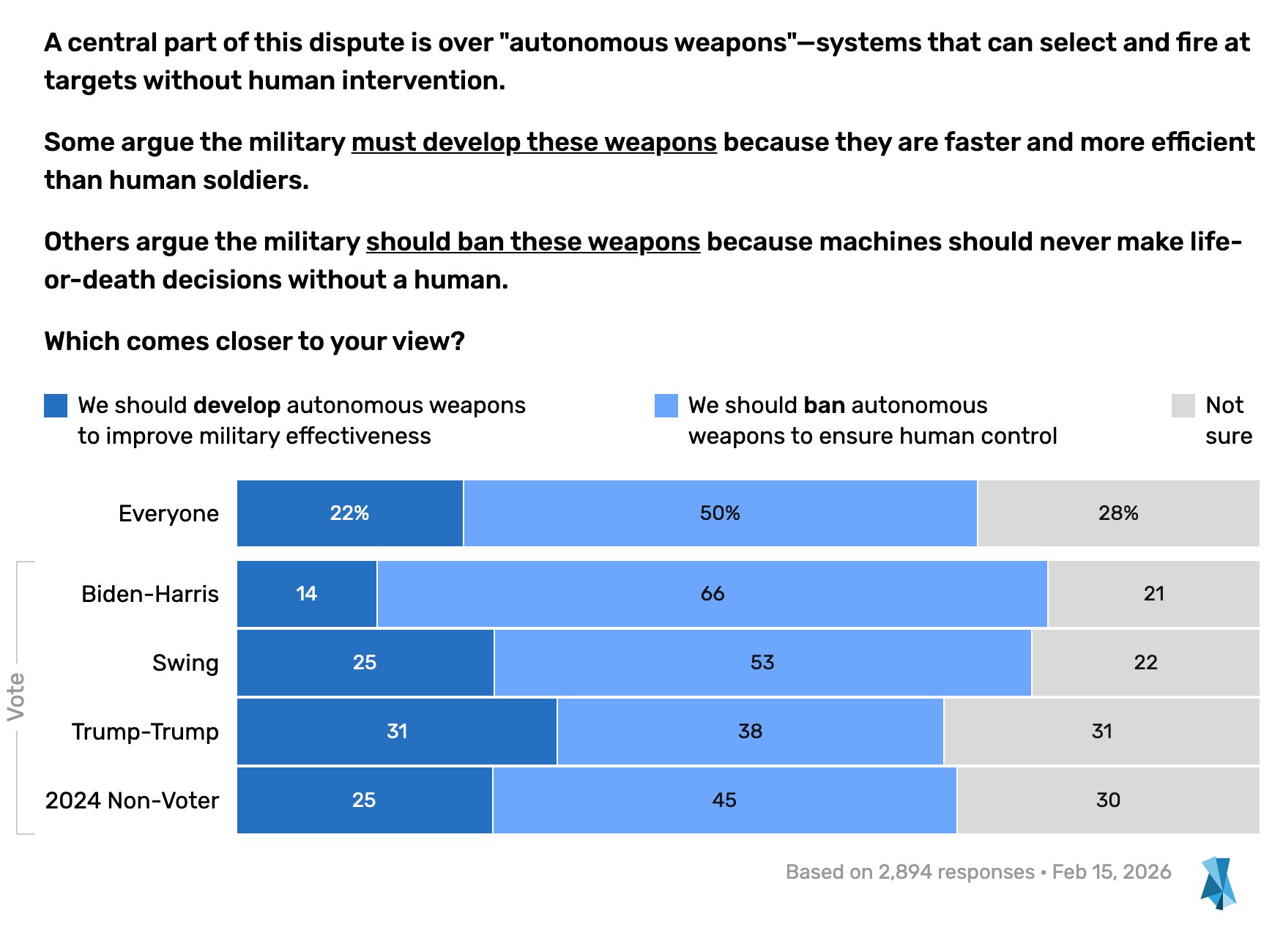

Anthropic has refused the Pentagon’s demand that it make Claude available for “all lawful purposes,” insisting in particular on blocking its use for the mass surveillance of Americans and the development of fully autonomous weapons.

However, it sounds like opening up Grok to “all lawful use” would enable it to be used in these ways.

Imagine, for example, that a newspaper like The New York Times publishes a story based on an intelligence leak. The Trump admin might suspect a member of the executive branch – or perhaps a member of Congress with access to the info – was responsible. So, they prompt, “Grok, who in Congress or in the government would be the most likely leaker of this information? Do some deep research, looking through all the classified documents they have access to, all the connections they have, all the social media and media comments they have made, etc. Do a total information awareness sweep to help us ferret out this treasonous mole!”

…

Unrelated, but here is an X post that agrees with my own thinking:

https://x.com/nickcammarata/status/2025126183940358432#m

confused why this won’t go vertical even if intelligence doesn’t. when i was learning to code things would get too messy to continue, then at some point i could just… code. give me a cave & 5yrs and I’ll keep coding. I didn’t get exponentially smarter, just crossed a threshold

Indeed… phase transitions are possible not only in human performance, but also in machine performance at METR tasks.
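One way to see how a threshold effect could arise without any jump in "intelligence" is a simple reliability model. The sketch below is only an illustration under a strong assumption that is not from the post or from METR: a long task is treated as a chain of independent steps, each succeeding with the same probability `p`, so the whole task succeeds with probability `p ** n`. The function name `steps_at_half_success` is hypothetical.

```python
import math


def steps_at_half_success(p):
    """Number of independent steps at which overall success drops to 50%.

    Assumes each step succeeds with the same probability p and that steps
    are independent, so a task of n steps succeeds with probability p ** n.
    Solving p ** n = 0.5 gives n = ln(0.5) / ln(p).
    """
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    return math.log(0.5) / math.log(p)


if __name__ == "__main__":
    for p in (0.9, 0.99, 0.999):
        print(f"per-step reliability {p}: ~{steps_at_half_success(p):.0f} steps")
```

Under these assumptions, moving per-step reliability from 0.99 to 0.999 stretches the task length achievable at 50% success from roughly 69 steps to roughly 693. A modest improvement in the underlying skill can therefore look like a sudden change in what can be finished end to end.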

A good overview of the Anthropic/DoW conflict:

Anthropic’s Pentagon Problems

Anthropic is feuding with the U.S. military, despite their massive $200 million contract. The company says that its AI model, Claude, cannot be used for weapons development or surveillance. The Pentagon is pushing back against those limitations. WSJ’s Amrith Ramkumar joins Jessica Mendoza to explain why the Department of Defense is now threatening to label Anthropic a supply chain risk.

AI has no morality or need for a reputation.

Hence any systems in which it operates need strict limits.

Details about the meeting between Hegseth and Dario Amodei today:

https://www.axios.com/2026/02/24/anthropic-pentagon-claude-hegseth-dario

Defense Secretary Pete Hegseth gave Anthropic CEO Dario Amodei until Friday evening to give the military unfettered access to its AI model or face harsh penalties, Axios has learned.

…

“The only reason we’re still talking to these people is we need them and we need them now. The problem for these guys is they are that good,” a Defense official told Axios ahead of the meeting.

…

In the room: In a sign of how seriously the Pentagon is taking this dispute, Hegseth was joined in the meeting by Deputy Secretary Steve Feinberg, Under Secretary for Research and Engineering Emil Michael, Under Secretary for Acquisition and Sustainment Michael Duffy, Hegseth’s chief spokesperson Sean Parnell and general counsel Earl Matthews, the Pentagon’s top lawyer.

Advanced AI models appear willing to deploy nuclear weapons without the same reservations humans have when put into simulated geopolitical crises.

Kenneth Payne at King’s College London set three leading large language models – GPT-5.2, Claude Sonnet 4 and Gemini 3 Flash – against each other in simulated war games. The scenarios involved intense international standoffs, including border disputes, competition for scarce resources and existential threats to regime survival.

The AIs were given an escalation ladder, allowing them to choose actions ranging from diplomatic protests and complete surrender to full strategic nuclear war. The AI models played 21 games, taking 329 turns in total, and produced around 780,000 words describing the reasoning behind their decisions.

In 95 per cent of the simulated games, at least one tactical nuclear weapon was deployed by the AI models. “The nuclear taboo doesn’t seem to be as powerful for machines [as] for humans,” says Payne.

Full article: AIs can’t stop recommending nuclear strikes in war game simulations
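For a sense of scale, the figures quoted above can be turned into per-game and per-turn averages. This is only back-of-the-envelope arithmetic on the numbers in the excerpt; reading "95 per cent" as a share of the 21 games is an assumption, and the variable names are illustrative.

```python
# Figures as quoted in the excerpt above.
games = 21
turns = 329
words = 780_000          # "around 780,000 words", so treat results as approximate
nuclear_share = 0.95     # assumed to be a share of the 21 games

turns_per_game = turns / games
words_per_turn = words / turns
# Nearest whole number of games consistent with the quoted percentage.
games_with_nuclear_use = round(nuclear_share * games)

if __name__ == "__main__":
    print(f"average turns per game: {turns_per_game:.1f}")
    print(f"average words of reasoning per turn: {words_per_turn:.0f}")
    print(f"games with at least one tactical nuclear weapon: {games_with_nuclear_use} of {games}")
```

On that reading, the quoted percentage corresponds to 20 of the 21 games, with each game averaging a little under 16 turns and each turn producing on the order of 2,400 words of stated reasoning.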

It’ll fake up the dark red line, then plunge toward the light pink.