For years, the resistance to artificial intelligence (AI) looked manageable. There were academics writing open letters, Hollywood writers striking over contract language, and think-tank reports warning of job displacement. Tech executives nodded, pledged responsibility, and kept building as fast as they could.

Then someone threw a firebomb at Sam Altman’s house.

On Friday, a 20-year-old man named Daniel Moreno-Gama traveled from Spring, Texas, to San Francisco’s Pacific Heights neighborhood and hurled an incendiary device at the gate of OpenAI CEO Sam Altman’s $27 million home, igniting a fire on the exterior gate. No one was injured, but Moreno-Gama was arrested approximately an hour later outside OpenAI’s headquarters — where he was allegedly trying to shatter the building’s glass doors with a chair and threatening to burn the facility to the ground. He is now facing state charges of attempted murder and federal charges that could include domestic terrorism.

Authorities afterward found a manifesto warning of humanity’s “extinction” at the hands of AI and expressing an urge to commit murder, and a disturbing personal Substack. The next morning, Altman posted a plea for sanity on his X account, attaching a photo of his husband and young child. “Normally we try to be pretty private, but in this case I am sharing a photo in the hopes that it might dissuade the next person from throwing a Molotov cocktail at our house, no matter what they think about me,” Altman wrote.

To no avail. Early Sunday morning, two more Gen-Zers, one 23 and the other 25, were arrested after shooting a gun near the Russian Hill home of Sam Altman (it is unclear at this time if the shooting was targeted).

After the attacks, pundits and professional opinion-havers pointed fingers in every direction: at the StopAI crowd, a radical group that has staged protests and flash-subpoena-deliveries to try to halt the pace of artificial intelligence altogether; at the news media, which has critically covered Altman and his peers; and at Altman himself, for stoking fear about AI displacement with his sometimes-apocalyptic rhetoric. Among the older commentariat, however, the dominant note was remorse and well wishes for Altman.

But in the younger, less formal corners of the internet, like Instagram and TikTok, the comments under every post about the attacks generally run in one direction. “He’s not scared enough.” “Based do it again.” “FREE THAT MAN HE DID NOTHING WRONG.” “Finally some good news on my feed.”

Those comments are ugly, but for those who’ve been paying attention to the anti-AI backlash build, not shocking. At all.

Gen Z is not a fan of AI. At all

The middle of the distribution of Gen Z’s feelings about AI ranges from apprehension to downright hatred. Although more than half of Gen Zers living in the U.S. use AI regularly, according to a recently released Gallup poll, fewer than a fifth feel hopeful about the technology. About a third say the technology makes them angry. And nearly half say it makes them afraid.

Gallup’s own senior education researcher, Zach Hrynowski, blamed the bad vibes at least partially on the dwindling job market. The oldest Zoomers, he told Axios, are the angriest, as they are “acutely aware” of the ability of a technology to transform cultural norms without a second thought, unlike Gen Xers, who are trained to see new technologies as toys and are still “playing around with AI.”

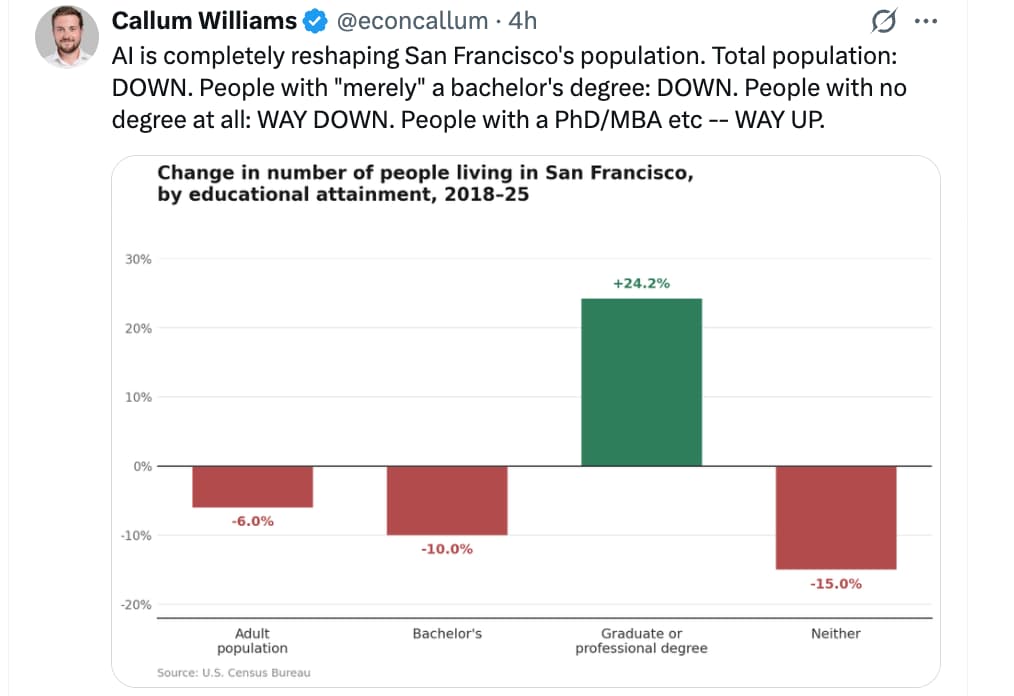

Indeed, job prospects for recent Gen Z graduates are abysmal; Bloomberg just reported that 43% of young graduates are “underemployed,” meaning they hold jobs that require less education than they have.

But that can’t explain all of the vitriol. Perhaps some of it is the yawning gap between promise and reality, symbolized by Altman himself. The OpenAI CEO has suggested that AI will usher in an era of “universal basic compute,” that people will barely need to work, that the future will be almost frictionless. That isn’t happening as of 2026.

Instead, inflation remains stubbornly untamable, as it has throughout the decade; consumers have never felt worse about their financial state, and Gen Z feels like they’re entering a “starter economy” without plentiful jobs or affordable homes. And so there’s a real mismatch, as Alex Hanna, a professor and researcher who studies the social impacts of AI, put it, “between consumer confidence and people’s pocketbooks and budgets, and what the technologists and the AI companies say the future is supposed to look like.”

Data center backlash

This is not just a Gen Z problem, either. In the American heartland, data centers are being proposed at a pace that local communities never anticipated and never agreed to, and those communities are increasingly pushing back.

The numbers are serious. According to a report from 10a Labs’ Data Center Watch, at least $18 billion worth of data center projects have been blocked and another $46 billion delayed over the past two years due to local opposition. At least 142 activist groups across 24 states are now actively organizing to block data center construction and expansion. A Heatmap Pro review of public records found that 25 data center projects were canceled following local pushback in 2025 alone, four times as many as in 2024, with 21 of those cancellations occurring in the second half of the year as electricity costs grew.

The concerns driving this resistance are less about existential AI risk and more about typical kitchen-table complaints: communities consistently cite higher utility bills, water consumption, noise, impacts on property values, and green space destruction as their primary objections. Water use is mentioned as a top concern in more than 40% of contested projects, according to a Heatmap Pro review of public records.

Meanwhile, Hanna noted, companies keep wielding the threat of AI replacing workers as “leverage.” She added, “Employers are making room for AI investments. They want to show that they can lay off people and do what they’re currently doing with a decrease in headcount.”