I found Claude’s Soul document to be interesting:

My major concern with all of these is the total disregard for privacy.

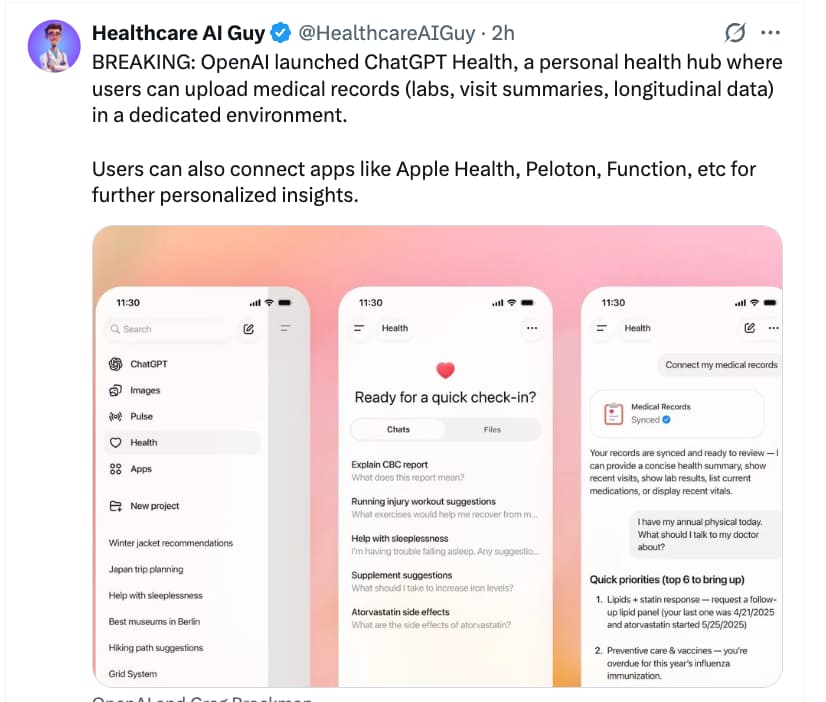

If you’re going to give OpenAI access to all your medical records, I’m sure ChatGPT will do an amazing job of analysing them and explaining things - especially for those of us who are not as “enthusiastic” as members of this forum. The models are very good at breaking down results and explaining them.

However - what is the cost of doing so? One is that your personal data becomes training data for more AI models. The companies are simply running out of legitimate human-created training data, and they pay big bucks for access to data now. That’s why they love you to upload new original writing, scientific grant proposals, unpublished papers, your kids’ homework, financial reports, images etc. Patients consenting to share their medical records gives the companies a new and massive stream of valuable data.

Secondly, what about privacy? There are many implications here, because we know how dirty these corporations can be. After all, all of the AI companies have already committed mass copyright infringement, and scraped websites for content which didn’t belong to them. There are also all sorts of other people who would love to get their hands on those data. Imagine the treasure trove it would be for advertising agencies, insurance companies, banks, mortgage lenders etc if they could access your health data. Hell, I’m sure the government would love to know everybody’s ChatGPT history. Imagine how much crime they could uncover of people asking how to hide their crypto profits, avoid taxes etc. IMO, if you provide the information, it will eventually make its way to those people.

The point is - these companies are offering a very good service, which is super convenient. But I advise everybody not to lose sight of the bigger picture and long-term implications.

Personally, I am becoming very interested in local, offline models which can run on your own devices. There are several good models out there now, and some are specialised in medical knowledge. If you don’t have the compute power at home, some cloud GPU services are available - not perfect, but at least you’re not directly sending your most personal data to OpenAI.

Dose of uncertainty: Experts wary of AI health gadgets at CES

Health tech gadgets displayed at the annual CES trade show make a lot of promises. A smart scale promoted a healthier lifestyle by scanning your feet to track your heart health, and an egg-shaped hormone tracker uses AI to help you figure out the best time to conceive.

Tech and health experts, however, question the accuracy of products like these and warn of data privacy issues — especially as the federal government eases up on regulation.

The Food and Drug Administration announced during the show in Las Vegas that it will relax regulations on “low-risk” general wellness products such as heart monitors and wheelchairs. It’s the latest step President Donald Trump’s administration has taken to remove barriers for AI innovation and use. The White House repealed former President Joe Biden’s executive order establishing guardrails around AI, and last month, the Department of Health and Human Services outlined its strategy to expand its use of AI.

You can turn off OpenAI training on your data. It’s not what’s uploaded that’s valuable; it’s the entire conversation. After all, they are producing conversations in some sense, rather than a new generation of what was uploaded.

Since most health data is digital, in my opinion you should assume that it’s already public in some sense.

RAM prices are crazy now, but you really could take an old gaming PC, upgrade the RAM, and then run gpt-oss with 120 billion parameters well. It’s really ChatGPT at home.
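
For a rough sense of how much RAM that takes, here is a back-of-the-envelope sketch. It only counts the weights, and the bits-per-weight values are illustrative assumptions, not the actual storage format of gpt-oss. Real usage will be higher once you add the context cache and the operating system.

```python
def weights_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# "120 billion parameters" as quoted in the post above.
PARAMS = 120e9

# Hypothetical precisions, for illustration only.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weights_memory_gb(PARAMS, bits):.0f} GB for weights")
```

Under these assumptions, only an aggressively quantized version (around 4 bits per weight, roughly 60 GB) is within reach of a desktop with upgraded RAM.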

And the trend continues… but, as seems to be the pattern, Anthropic actually does it with some safeguards, like HIPAA-oriented infrastructure (though exactly what that means is a little unclear to me).

Livestream from 1 hour ago, life sciences and healthcare with Dario Amodei for ~15 min after 5 min mark.

Wow, his sister and co-founder Daniela had her second child a few months ago. She had an infection during pregnancy, and many fancy doctors said it was a viral infection. She got a second opinion from Claude, which suggested it was bacterial and that she needed antibiotics within 48 hrs or it would go systemic. She took them, and further testing showed Claude was right (11:40 mark).

In the livestream, rapamycin is mentioned at the 48 min mark by David Fajgenbaum, co-founder and president of Every Cure:

Relatively brief and readable article (no need to AI-summarize). Stanford has lots of sleep data to feed their AI.

"“The most information we got for predicting disease was by contrasting the different channels,” Mignot said. Body constituents that were out of sync — a brain that looks asleep but a heart that looks awake, for example — seemed to spell trouble."

NEW: ARISE, a Stanford–Harvard network of clinicians and researchers, just published the State of Clinical AI 2026.

Here are the major takeaways:

- “Superhuman” results are real but fragile. AI can match or beat clinicians on narrow, well-defined test cases. But small changes that introduce uncertainty or ambiguity cause sharp performance drops.
- Uncertainty remains the core weakness. When information is incomplete, evolving, or ambiguous, AI systems struggle. They often commit confidently to wrong answers rather than expressing uncertainty.
- AI shines where scale beats judgment. The strongest evidence is in prediction at scale: early warning systems, risk forecasting, disease trajectories, and population-level insights that humans cannot compute manually.
- Most clinical AI studies don’t resemble real care. Nearly half rely on exam-style questions. Very few use real patient data, measure uncertainty, or test fairness. This limits how much results translate to practice.
- Realistic evals reveal useful failure modes. Realistic tests like simulated EHRs and long patient interactions expose how AI actually fails, such as losing context, missing updates, or committing too early to wrong conclusions.
- AI works best as a teammate, not replacement. Across imaging, diagnostics, and treatment planning, clinician + AI outperforms either alone when integration is done well.
- Poor integration can make decisions worse. Over-reliance, automation bias, and reduced vigilance are real risks. In some cases, clinicians performed worse with AI than without it.
- Patient-facing AI scales fastest and carries unique risk. These tools expand access and engagement, but operate without real-time professional oversight. Confidence without context is especially dangerous.
- Outcomes matter more than engagement. Patient-facing AI is often evaluated on simulations or usage metrics, not whether it improves health, reduces errors, or speeds appropriate escalation.
- The field is shifting from capability to evidence. The next phase is not better demos but prospective, real-world trials that show when AI actually improves care and when it does not.

This aligns with my experience after testing various AI platforms in areas where I have done a lot of research and have good competence. AI is useful in some contexts, but generally tends to be highly unreliable in unpredictable ways. I think one should be very, very cautious in relying on AI for healthcare decisions.

No, not like that. Admittedly, I usually only do “attempt1”, and rarely do “attempt2” or more, unless it’s a follow-up query asking to incorporate some paper the first AI missed. My approach is also not conversational. However, I do set very tight and very detailed constraints - asking to focus on specific MOA, incorporate specific papers, avoid some sources (articles without citations etc.), focus on high credibility, exclude certain presumptions etc. So my query is very detail-oriented. I figure if the AI platform fails here, there’s little point in my repeating an ask that I’ve already specified in my first attempt. It failed - my confidence that it won’t fail again craters.

Research from last year supports the idea that multi-turn conversations aren’t actually good for certain tasks anyway. Maybe it’s fixed now. I admit I haven’t read the paper, but I simply believe it (at least for the models of that time):

Our experiments confirm that all the top open- and closed-weight LLMs we test exhibit significantly lower performance in multi-turn conversations than single-turn, with an average drop of 39% across six generation tasks.

In simpler terms, we discover that when LLMs take a wrong turn in a conversation, they get lost and do not recover.